⏩ TL;DR: NB-IoT vs LTE-M vs Cat-1 bis

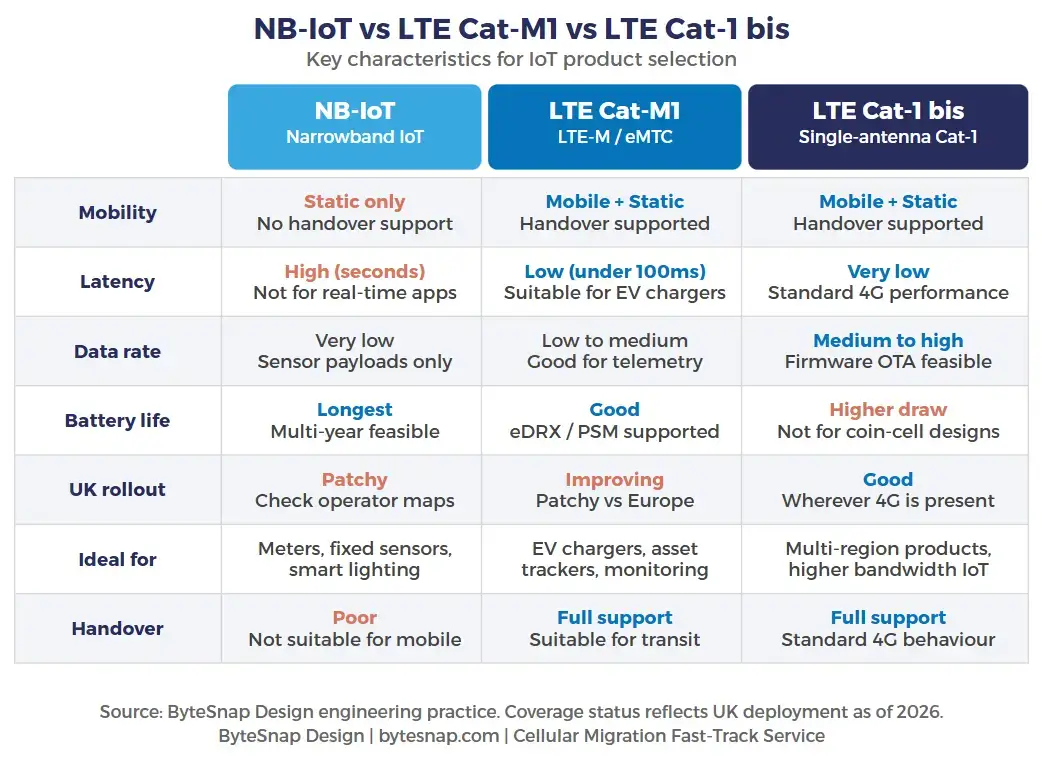

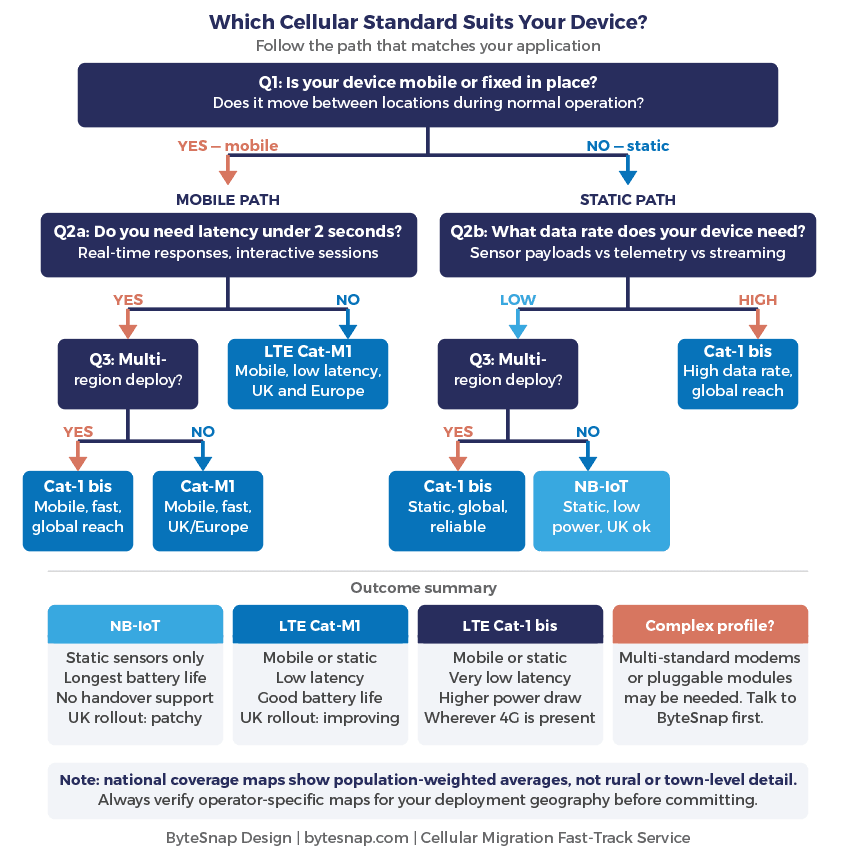

There’s no universal cellular standard that is low power, low latency, low cost, and works everywhere. The right choice depends on four things: whether your device moves, what data rate you need, your latency tolerance, and where in the world it operates. This article walks through each decision in plain terms.

No standard does everything. Here's how to choose

The 2G and 3G shutdowns have pushed a lot of manufacturers into a cellular redesign they hadn’t planned for.

Most of the conversations I have start the same way: “We need to replace the modem. What should we use?”

That question is harder than it sounds. The replacement landscape for 2G and 3G is genuinely fragmented.

Different countries have retired different networks on different schedules, and the standards replacing them – NB-IoT, LTE Cat-M1, LTE Cat-1 bis, and others – each suit different applications. Choosing the wrong one early means revisiting the design in three years, or shipping a product that doesn’t work where your customers are.

Here are the four questions I ask every time, and what the answers tell you.

Four Questions Before You Pick a Standard

Does your device move?

This is the first filter, and it rules out more options than most teams expect.

NB-IoT has a fundamental limitation: it handles mobility poorly. As originally specified, it has no connected-mode handover, so if your device moves between cell towers – on a vehicle, a tracked asset, or anything in transit – it has to drop the connection and re-establish it on the new cell, and it will struggle to maintain a session. It is designed for static devices. Water meters, smart lighting, fixed industrial sensors. Something bolted to a wall or buried in the ground. Put it on a truck, and it becomes unreliable.

LTE Cat-M1 and Cat-1 bis both support proper cellular handover. If your device moves at all, start your shortlist there.

Do you need a response in under 2 seconds?

This is where NB-IoT often gets ruled out for applications that looked like a good fit on paper.

NB-IoT latency is typically measured in seconds, sometimes tens of seconds.

For a water meter reporting daily readings, that is fine. For an EV charger that needs to authorise a session and respond to a user standing in a car park, it is completely unsuitable. No one is waiting fifteen seconds for a reply.

If your application involves any kind of interactive exchange – payment, command-response, real-time monitoring – check the latency spec before you commit to NB-IoT. It disqualifies itself quickly for time-sensitive use cases.

LTE Cat-M1 latency is typically under 100 ms. Cat-1 bis is lower still. For anything needing a prompt response, both are workable.

What data rate do you actually need?

This narrows the field further. NB-IoT and LTE Cat-M1 are low-power wide-area standards, built for infrequent, small data payloads.

Sensor readings, status updates, meter data.

If you need to push firmware updates over the air regularly, stream video, or handle high-frequency telemetry, you are looking at Cat-1 bis or higher.

For most industrial IoT applications, the data rate of LTE Cat-M1 is more than adequate.

The temptation to over-specify here is real, and it costs you in power consumption and unit hardware cost.

Where in the world will this device operate?

This is the question teams most consistently underestimate.

Coverage for NB-IoT and LTE Cat-M1 in the UK has been frustratingly slow to roll out compared with much of Europe. National coverage maps from bodies like the GSMA give a useful headline view, but they don’t show you town-by-town or rural coverage gaps. Network operators publish more granular maps, and you need those for your specific deployment geography before committing to a standard.

LTE Cat-1 bis operates wherever 4G exists, making it the most geographically reliable choice for multi-region products. The trade-off is higher power draw and a higher unit cost than the LPWA standards.

If your product ships globally and you cannot guarantee which specific networks will be available, Cat-1 bis is often the pragmatic choice – even if it isn’t the most elegant one on paper.
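As a rough illustration, the four questions above can be written down as a small filter. This is only a sketch of the reasoning in this article, not a substitute for checking operator coverage in your deployment area; the `Requirements` fields and the `shortlist` function are names invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Answers to the four questions. Field names are illustrative only."""
    device_moves: bool             # does the device change cells while in use?
    needs_fast_response: bool      # is a reply needed in under about 2 seconds?
    needs_high_throughput: bool    # regular OTA firmware, video, high-rate telemetry?
    lpwa_coverage_confirmed: bool  # NB-IoT / Cat-M1 checked on operator maps?


def shortlist(req: Requirements) -> list[str]:
    """Return the standards that survive all four filters, lowest power first."""
    candidates = ["NB-IoT", "LTE Cat-M1", "LTE Cat-1 bis"]

    # Questions 1 and 2: mobility or interactive latency rules out NB-IoT.
    if req.device_moves or req.needs_fast_response:
        candidates.remove("NB-IoT")

    # Question 3: high data rates leave Cat-1 bis (or something higher).
    if req.needs_high_throughput:
        candidates = [c for c in candidates if c == "LTE Cat-1 bis"]

    # Question 4: without confirmed LPWA coverage, fall back to plain 4G.
    if not req.lpwa_coverage_confirmed:
        candidates = [c for c in candidates if c == "LTE Cat-1 bis"]

    return candidates


if __name__ == "__main__":
    tracker = Requirements(device_moves=True, needs_fast_response=False,
                           needs_high_throughput=False,
                           lpwa_coverage_confirmed=True)
    print(shortlist(tracker))
```

The list is ordered so that the first surviving entry is the lowest-power option, which reflects the advice above not to over-specify.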


Why Coverage Maps Will Mislead You

One thing worth saying plainly: there is no single standard that cleanly replaces 2G everywhere 2G existed.

3G was retired first in many countries precisely because 4G was a like-for-like replacement on the voice and data side. 2G proved harder to switch off because so many installed IoT devices still depend on it, and the LPWA standards that logically replace it for IoT have rolled out unevenly.

The practical consequence is that a product designed for one market may need a different modem configuration entirely for another. We have had projects where a device worked correctly in the UK and failed the moment it crossed into Ireland. Not because of a hardware fault, but because of how that specific SIM interacted with the local network configuration. That kind of issue surfaces only in field testing, and it is expensive to discover late.

If your deployment spans multiple regions, multi-standard modems are worth serious consideration. They cost more and consume more power, but they give you flexibility a single-standard design cannot match. Some manufacturers go further and use pluggable modem modules – physically socketed units that can be swapped for different regional variants without a board redesign. It is an expensive approach, but where BOM cost is secondary to operational flexibility, it removes a meaningful risk.

One other thing to check early: export restrictions

Certain modem chipsets face restrictions on sale into the US market. If your product has any chance of reaching North America, verify your shortlisted modules are exportable before you design them in.

Where Retrofits Actually Go Wrong

1: Antenna and RF mismatch

Your existing antenna is tuned for specific frequency bands. 2G in the UK operated on 900 MHz and 1800 MHz. Modern LTE standards require coverage across 800 MHz, 1800 MHz, and 2600 MHz at minimum, and some modules support fifteen or more bands.

An antenna optimised for 900 MHz will not perform adequately across this range. Even a moderate impedance mismatch means significant power being reflected back into the module rather than radiated, which degrades range, increases retries, and causes problems at certification.
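To put a number on "power being reflected back", the standard transmission-line relations link a measured VSWR to the fraction of forward power reflected and to the resulting mismatch loss. The sketch below uses only those textbook formulas; the VSWR values in the loop are illustrative, not measurements from any particular antenna.

```python
import math


def reflection_coefficient(vswr: float) -> float:
    """Magnitude of the reflection coefficient: (VSWR - 1) / (VSWR + 1)."""
    if vswr < 1.0:
        raise ValueError("VSWR must be >= 1")
    return (vswr - 1.0) / (vswr + 1.0)


def reflected_power_fraction(vswr: float) -> float:
    """Fraction of forward power reflected back towards the module."""
    return reflection_coefficient(vswr) ** 2


def mismatch_loss_db(vswr: float) -> float:
    """Reduction in delivered power, in dB, caused by the mismatch alone."""
    return -10.0 * math.log10(1.0 - reflected_power_fraction(vswr))


if __name__ == "__main__":
    for vswr in (1.5, 2.0, 3.0):  # illustrative values only
        print(f"VSWR {vswr}: "
              f"{reflected_power_fraction(vswr) * 100:.1f}% reflected, "
              f"{mismatch_loss_db(vswr):.2f} dB mismatch loss")
```

A VSWR of 3:1, for example, corresponds to a quarter of the forward power being reflected, about 1.25 dB of mismatch loss before any loss in antenna efficiency is counted.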

We have seen range drop by 40% on direct antenna reuse. Fixing mismatch requires RF test equipment and expertise that most embedded teams don’t keep in-house, and a single failed certification attempt typically costs £15,000 to £25,000.

2: Peak current supply

Cellular transmission involves bursts of high current draw. A legacy 2G module might draw 2 A peak; an LTE replacement can draw 2.8 A or more.

In battery-powered products without sufficient supply margin, this causes brownouts during transmission bursts – intermittent resets that appear only in the field, under real battery conditions, not on a bench supply.

It is one of the more frustrating faults to diagnose because it doesn’t reproduce reliably in the lab.
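A first-order check for this is Ohm's law: the rail seen by the module sags by the peak current multiplied by the total source resistance (battery internal resistance plus connectors and traces). The sketch below is a deliberately simplified DC model that ignores bulk capacitance and battery ageing; the 3.6 V cell, the resistances, and the 3.0 V minimum supply are assumed figures for illustration, so take the real ones from your battery and module datasheets.

```python
def rail_voltage_under_load(v_open_circuit: float,
                            source_resistance_ohm: float,
                            peak_current_a: float) -> float:
    """Voltage at the module during a transmit burst: V = Voc - I * R."""
    return v_open_circuit - peak_current_a * source_resistance_ohm


def brownout_risk(v_open_circuit: float,
                  source_resistance_ohm: float,
                  peak_current_a: float,
                  v_min_module: float) -> bool:
    """True if the sagging rail falls below the module's minimum supply."""
    v_rail = rail_voltage_under_load(v_open_circuit,
                                     source_resistance_ohm,
                                     peak_current_a)
    return v_rail < v_min_module


if __name__ == "__main__":
    V_CELL = 3.6      # assumed open-circuit battery voltage, volts
    V_MIN = 3.0       # assumed module minimum supply, volts
    R_BATTERY = 0.25  # assumed battery + trace resistance, ohms
    R_BENCH = 0.02    # assumed bench supply + leads, ohms

    for label, r in (("battery", R_BATTERY), ("bench supply", R_BENCH)):
        for i_peak in (2.0, 2.8):  # peak currents quoted in the text
            risk = brownout_risk(V_CELL, r, i_peak, V_MIN)
            print(f"{label}, {i_peak} A peak: brownout risk = {risk}")
```

Under these assumed numbers only the battery case at 2.8 A dips below the minimum, which is consistent with a fault that shows up in the field but not on a bench supply.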

3: Firmware and modem driver rework

The AT command interface is the software layer your firmware uses to control the modem. It varies between manufacturers and sometimes between module families from the same vendor.

Your existing firmware carries years of accumulated logic specific to your old module – registration sequences, connection handling, error recovery paths.

None of this translates cleanly to a new module, even a physically pin-compatible one.
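One way to contain this rework is to keep response parsing behind small, testable functions, so module-specific differences are isolated from the rest of the firmware. As a minimal sketch, the parser below handles the read response to `AT+CEREG?`, the EPS registration query defined in 3GPP TS 27.007. The names `EpsRegStatus`, `parse_cereg_read` and `is_registered` are invented for this example, and vendor extensions or unsolicited result codes may need separate handling.

```python
import re
from enum import IntEnum


class EpsRegStatus(IntEnum):
    """<stat> values 0 to 5 of +CEREG as defined in 3GPP TS 27.007."""
    NOT_REGISTERED = 0
    REGISTERED_HOME = 1
    SEARCHING = 2
    DENIED = 3
    UNKNOWN = 4
    REGISTERED_ROAMING = 5


# Read response format: +CEREG: <n>,<stat>[,<tac>,<ci>,<AcT>...]
_CEREG_READ = re.compile(r"^\+CEREG:\s*(\d+)\s*,\s*(\d+)")


def parse_cereg_read(line: str) -> EpsRegStatus:
    """Extract the registration status from an AT+CEREG? response line."""
    match = _CEREG_READ.match(line.strip())
    if match is None:
        raise ValueError(f"not a +CEREG read response: {line!r}")
    stat = int(match.group(2))
    try:
        return EpsRegStatus(stat)
    except ValueError:
        # Higher <stat> values exist in the spec; this sketch treats
        # anything it does not model as UNKNOWN.
        return EpsRegStatus.UNKNOWN


def is_registered(status: EpsRegStatus) -> bool:
    """True when the modem is attached on its home or a roaming network."""
    return status in (EpsRegStatus.REGISTERED_HOME,
                      EpsRegStatus.REGISTERED_ROAMING)


if __name__ == "__main__":
    status = parse_cereg_read("+CEREG: 0,5")
    print(status.name, is_registered(status))
```

Because the parser takes a plain string, it can be unit-tested against captured responses from both the old and the new module without hardware attached.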

On embedded Linux platforms, proper modem integration also requires kernel-level driver work: USB interface configuration, power management integration, and data path setup.

Teams that underestimate this piece are typically the ones still debugging eight months in.

Five Questions to Answer Before You Pick a Standard

Work through these before committing to a module. Each answer narrows the field significantly.

| Question | Why it matters |
|---|---|
| Is your device mobile or fixed in place? | If it moves between locations, NB-IoT is ruled out immediately. It does not support cellular handover between towers. |
| Do you need a response in under 2 seconds? | NB-IoT latency is typically measured in seconds, sometimes longer. EV chargers, payment terminals, and command-response applications need Cat-M1 or Cat-1 bis. |
| What data rate does your application actually need? | Most sensor and telemetry use cases fit comfortably within Cat-M1. Over-specifying here costs you in power draw and hardware unit cost. Only reach for Cat-1 bis if you genuinely need the higher throughput. |
| Which countries will this device operate in? | NB-IoT and Cat-M1 rollout is patchy in the UK and varies significantly by country. Cat-1 bis operates wherever 4G is present. Multi-region deployments often need a multi-standard modem or a fallback strategy. |
| What are the lead times on your shortlisted modules? | Check with distributors before finalising your BOM. Where lead times are a risk, design the PCB to accept a footprint-compatible alternative from a second vendor. |

If your answers point in different directions, for example a mobile device but patchy Cat-M1 coverage in your target region, you likely need a multi-standard modem or a specialist conversation before committing to hardware. ByteSnap's Cellular Migration Fast-Track service starts with exactly this kind of scoping.

A few things worth considering before you lock the design

The newer LPWA standards genuinely enable products that weren’t feasible before. Very low power consumption opens up battery-operated connected sensors in locations where battery replacement is difficult or expensive. Rail-side monitoring is a good example – putting someone near a live track line carries its own costs and complications, so anything that extends service intervals has real operational value.

ATEX-rated connected sensors in hazardous atmospheres are another area where the improved power profiles of NB-IoT and Cat-M1 are making previously impractical designs viable.
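To see why the power profile matters so much for service intervals, a back-of-envelope battery life estimate is usually enough at the scoping stage. The sketch below averages sleep and active current over a day and divides the usable battery capacity by that figure. Every number in the example (5 µA sleep, 100 mA active for 30 seconds a day, a 3600 mAh cell with 80% usable) is an assumption for illustration, and self-discharge is ignored.

```python
SECONDS_PER_DAY = 24 * 60 * 60
HOURS_PER_YEAR = 24 * 365


def average_current_ma(sleep_current_ma: float,
                       active_current_ma: float,
                       active_seconds_per_day: float) -> float:
    """Time-weighted average current over one day, in milliamps."""
    duty = active_seconds_per_day / SECONDS_PER_DAY
    return active_current_ma * duty + sleep_current_ma * (1.0 - duty)


def battery_life_years(capacity_mah: float,
                       avg_current_ma: float,
                       usable_fraction: float = 0.8) -> float:
    """Estimated life in years, ignoring self-discharge and temperature."""
    hours = capacity_mah * usable_fraction / avg_current_ma
    return hours / HOURS_PER_YEAR


if __name__ == "__main__":
    # All figures below are assumed for illustration only.
    avg = average_current_ma(sleep_current_ma=0.005,
                             active_current_ma=100.0,
                             active_seconds_per_day=30.0)
    years = battery_life_years(capacity_mah=3600.0, avg_current_ma=avg)
    print(f"average current: {avg:.4f} mA, estimated life: {years:.1f} years")
```

With these made-up inputs the estimate comes out at roughly eight years. The more useful exercise is to vary the active time per day and watch how quickly the figure moves, since that is the term the choice of standard and reporting interval affects most.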

On the supply chain side, check modem lead times before finalising your design choice. We have seen lead times extend significantly on specific modules, and discovering this in the run-up to production is not a good position to be in.

Talk to distributors early. Where possible, design the PCB to accept footprint-compatible alternatives so you have options if your primary choice becomes difficult to source.

And if you are doing a redesign anyway, it is worth asking whether the new standard could improve the product, not just maintain it. Switching from 2G to Cat-M1 or NB-IoT is not just a compliance exercise.

You may be able to extend battery life substantially, reduce unit cost, or unlock functionality that simply wasn’t possible on the old network.

NB-IoT vs LTE-M vs Cat-1 bis FAQs:

Can I just use whichever standard has the best UK coverage map?

National coverage maps show population-weighted coverage, not land area. A standard showing 95% UK population coverage may still have significant gaps in rural locations. Always check operator-specific detailed maps for your actual deployment geography before committing.

Why is LTE Cat-M1 rolling out more slowly in the UK than in Europe?

Partly spectrum allocation, partly commercial prioritisation. Some European operators invested earlier in Cat-M1 infrastructure. The UK picture has improved, but coverage remains patchy compared with much of the continent, where Cat-M1 is widely available. It is one reason we often retain a 2G fallback in UK Cat-M1 designs.

Is 5G relevant for most IoT applications?

For most industrial IoT use cases, not yet. 5G is expensive, draws more power, and the coverage advantage over 4G LTE for IoT applications is minimal in most deployments. Where it does make sense is high-bandwidth, low-latency applications – live video inspection, first-person drone operation, high-density connected infrastructure. For sensor data and telemetry, LTE Cat-M1 or Cat-1 bis will serve you better for the foreseeable future.

When is 2G being switched off in the UK?

Ofcom has indicated 2G decommissioning is expected by 2033, though individual operators may act earlier. That is enough time to manage a transition properly – but not enough time to keep deferring it.

How long does a cellular migration project typically take?

Around six months is a realistic estimate for a straightforward redesign, assuming you go in with clear geography and application requirements. Certification is usually the longest single phase. If your project is running longer than that, the most common reasons are antenna rework discovered late and certification failures that weren’t anticipated in the schedule.

Dunstan is a chartered electronics engineer who has been providing embedded systems design, production and consultancy to businesses around the world for over 30 years.

Dunstan graduated from Cambridge University with a degree in electronics engineering in 1992. After working in the industry for several years, he co-founded multi-award-winning electronics engineering consultancy ByteSnap Design in 2008. He then went on to launch international EV charging design consultancy Versinetic during the 2020 global lockdown.

An experienced conference speaker domestically and internationally, Dunstan covers several areas of electronics product development, including IoT, integrated software design and complex project management.

In his spare time, Dunstan enjoys hiking and astronomy.

Expand your knowledge

- Stalled Cellular Migration? 3 Reasons for Project Delays

- GSMA Mobile IoT Deployment Map — worldwide standard rollout status by region

- Ofcom: 2G and 3G Network Sunsets

- ByteSnap Cellular Migration Fast-Track Service https://www.bytesnap.com/design-solutions/cellular-migration-fast-track/

- 88% of Manufacturers Face Industrial IoT Obsolescence Risks — research-backed context on the scale of the cellular migration challenge: https://www.bytesnap.com/news-blog/industrial-iot-obsolescence-risks/

- Embedded Wireless Design 2026 Trends — includes guidance on LTE-M vs NB-IoT vs 5G RedCap for IoT products launching in 2026: https://www.bytesnap.com/news-blog/embedded-wireless-design-2026-trends/

- 1Global: 2G and 3G Sunsetting and What it Means for IoT — operator-level context on network shutdown timelines globally: https://www.1global.com/blog/iot/2g-and-3g-sunsetting-and-what-it-means-for-iot

- Onomondo: AT Commands Guide for IoT Devices — technical deep-dive into cellular AT command management: https://onomondo.com/blog/at-commands-guide-for-iot-devices/

- Taoglas: What’s New in Cellular Certification — PTCRB Updates — current PTCRB pre-certification guidance: https://www.taoglas.com/blogs/whats-new-in-cellular-certification/

- Farnell: Optimised Power Scheme for IoT Battery Packs — supercapacitor buffer design for NB-IoT peak current management: https://uk.farnell.com/optimized-power-scheme-reference-design-avoids-overdesign-of-iot-battery-packs-trc-edar