Radio Systems: Key considerations for your IoT product design

Communication is key

Connectivity is the basis of IoT devices. They all communicate with other products to some extent. While some use wired connections, such as Ethernet, most operate on wireless protocols and require a radio system.

Choosing the right system is a lengthy process that is well worth the trouble, as the ability of the final product to receive and transmit data hinges on this crucial step.

Here is an analysis of nine factors to consider when choosing a radio system for your next IoT device – whether it is tiny or massive – to help you make the most educated choice possible.

These factors are:

- Battery Life

- Range

- Size

- Unit Cost

- Regulations

- Development cost

- Data rate

- Interoperability

- Topology

Battery Life

While IoT devices are not required to be wireless, most are, and most of those depend on a battery or similar finite energy source for power. Customers also generally expect that the battery will not need changing frequently. You can learn more about reducing power in low power wireless devices here.

As consumers, we tend to purchase new devices to make life easier and more convenient. Daily battery changes would be expensive and completely nullify the benefits of the device.

IoT devices transfer data as a matter of course. However, the amount of data is typically small, and transmissions are often infrequent.

The radio system impacts the battery mostly through receiver power. This may seem counter-intuitive, as the transmitter uses more power when active. But the catch is that the transmitter is only active when transmitting – naturally – and this is not a lot of time. The receiver, on the other hand, can be active for extended periods while waiting for information.

Therefore, to minimise power usage, the receiver needs to be active less. Some radio systems specifically take this into account, and are designed to have receivers that only turn on at scheduled intervals and mesh together in the network to coordinate activity.

Unfortunately, only a few options have this kind of technology, and they tend to be more expensive. As an engineer, you will most likely need to build this coordination into your application, so that the receiver only turns on periodically.

This is not to say that transmit power does not impact battery life. However, the power consumption of a transmitter is largely dependent on the output power, which is in turn determined by the range requirement for the device.

For a device with tight power constraints, we would recommend Bluetooth Low Energy if range is a lower priority. Zigbee in endpoint mode is also very efficient. To put this in context, a battery-powered Zigbee sensor we designed would have a two-week battery life with the receiver always on, compared to eighteen months with the device turning the receiver on every thirty seconds to “check in”.
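The effect of receiver duty cycling on battery life can be sketched with some simple arithmetic. The figures below (battery capacity, receive and sleep currents, listen window) are illustrative assumptions, not the values from the Zigbee sensor mentioned above, and the model ignores transmit current and battery self-discharge.

```python
def average_current_ma(rx_current_ma, sleep_current_ma, rx_window_s, period_s):
    """Average current when the receiver is on for rx_window_s in every period_s."""
    duty = rx_window_s / period_s
    return duty * rx_current_ma + (1 - duty) * sleep_current_ma


def battery_life_days(capacity_mah, avg_current_ma):
    """Idealised battery life in days (no self-discharge, no transmit current)."""
    return capacity_mah / avg_current_ma / 24


# Assumed, illustrative figures only.
CAPACITY_MAH = 2000.0
RX_CURRENT_MA = 20.0
SLEEP_CURRENT_MA = 0.005

# Receiver permanently on: the listen window fills the whole period.
always_on_days = battery_life_days(
    CAPACITY_MAH, average_current_ma(RX_CURRENT_MA, SLEEP_CURRENT_MA, 30.0, 30.0)
)

# Receiver on for an assumed 0.1 s "check in" every 30 s.
check_in_days = battery_life_days(
    CAPACITY_MAH, average_current_ma(RX_CURRENT_MA, SLEEP_CURRENT_MA, 0.1, 30.0)
)

print(f"Always on: {always_on_days:.1f} days")
print(f"Check in every 30 s: {check_in_days:.0f} days")
```

Even with these rough numbers, the average current is dominated by how long the receiver is switched on, which is why the check-in interval is usually the first parameter to tune.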

GSM and Wi-Fi generally use a large amount of power and are either used in mains-powered systems or supported by large or rechargeable batteries. That said, some newer Wi-Fi technologies are able to disconnect from the network and rapidly reconnect to reduce the amount of time the radio system is active.

Range

Range fundamentally comes down to the link budget of the radio. The link budget is determined by the transmit power and the receiver sensitivity. Both are quoted on data sheets, usually in dBm (decibels relative to one milliwatt), while gains and losses are expressed in decibels (dB).

A simple link budget equation may look like this:

Received Power = Transmitted Power + Gains – Losses

Decibels are a logarithmic measurement. Therefore, this equation is actually a multiplication and division of the respective ratios.

Examples of gains include amplification from repeaters, while losses include any weakening of the signal due to propagation, and any obstacles.
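As a minimal sketch of the equation above, the following adds and subtracts decibel values and then checks that the same result is obtained by multiplying and dividing the underlying ratios. The example figures (14 dBm transmit power, two 2 dB antenna gains, 100 dB of path loss) are assumptions chosen purely for illustration.

```python
import math


def received_power_dbm(tx_power_dbm, gains_db, losses_db):
    """Received Power = Transmitted Power + Gains - Losses, all in dB/dBm."""
    return tx_power_dbm + sum(gains_db) - sum(losses_db)


def db_to_ratio(db):
    """Convert a decibel value to a linear power ratio."""
    return 10 ** (db / 10)


def dbm_to_mw(dbm):
    """Convert a power in dBm to milliwatts."""
    return 10 ** (dbm / 10)


# Assumed example values.
rx_dbm = received_power_dbm(14, gains_db=[2, 2], losses_db=[100])

# The same calculation in linear units: multiply by gains, divide by losses.
rx_mw = dbm_to_mw(14) * db_to_ratio(2 + 2) / db_to_ratio(100)

print(f"Received power: {rx_dbm} dBm")
print(f"Linear check matches: {math.isclose(rx_mw, dbm_to_mw(rx_dbm))}")
```

If the received power is above the receiver sensitivity quoted on the data sheet, the link should close; the difference between the two is the margin available for fading and obstacles.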

It is very difficult to predict the range of a device early on in a project, as it is so heavily impacted by the environment, both the immediate surroundings of the device and the wider space.

To illustrate the extremity of this impact, a radio tested within an office building could have a range of around 10 metres, but exactly the same system could transmit over a kilometre in an empty, open space.
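One rough way to see why the environment matters so much is the log-distance path loss model, in which loss grows with distance according to a path loss exponent. The sketch below is not a prediction tool: the exponents (2 for open space, 4 for an obstructed indoor space), the 0 dBm transmit power and the -90 dBm sensitivity are all assumed values, and real products need measuring in their target environment.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def free_space_path_loss_db(distance_m, freq_hz):
    """Free-space path loss between isotropic antennas."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT_M_S)


def path_loss_db(distance_m, freq_hz, exponent, ref_distance_m=1.0):
    """Log-distance model: free-space loss at the reference distance,
    then 10 * exponent dB of extra loss per decade of distance."""
    ref_loss = free_space_path_loss_db(ref_distance_m, freq_hz)
    return ref_loss + 10 * exponent * math.log10(distance_m / ref_distance_m)


def estimated_range_m(tx_power_dbm, rx_sensitivity_dbm, freq_hz, exponent,
                      ref_distance_m=1.0):
    """Distance at which the modelled path loss uses up the whole link budget."""
    max_loss_db = tx_power_dbm - rx_sensitivity_dbm
    ref_loss = free_space_path_loss_db(ref_distance_m, freq_hz)
    return ref_distance_m * 10 ** ((max_loss_db - ref_loss) / (10 * exponent))


# Same assumed radio, two assumed environments.
open_space_m = estimated_range_m(0, -90, 2.4e9, exponent=2.0)
indoor_m = estimated_range_m(0, -90, 2.4e9, exponent=4.0)

print(f"Open space: {open_space_m:.0f} m, obstructed indoor: {indoor_m:.0f} m")
```

With identical hardware, changing only the assumed exponent moves the estimated range by more than an order of magnitude, which mirrors the office versus open space comparison above.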

LoRa (Long Range Radio), a very strong contender for long distance, low-power solutions, has transmitted hundreds of kilometres up to weather balloons. Within a built-up environment, this range can be very quickly reduced to a couple of kilometres.

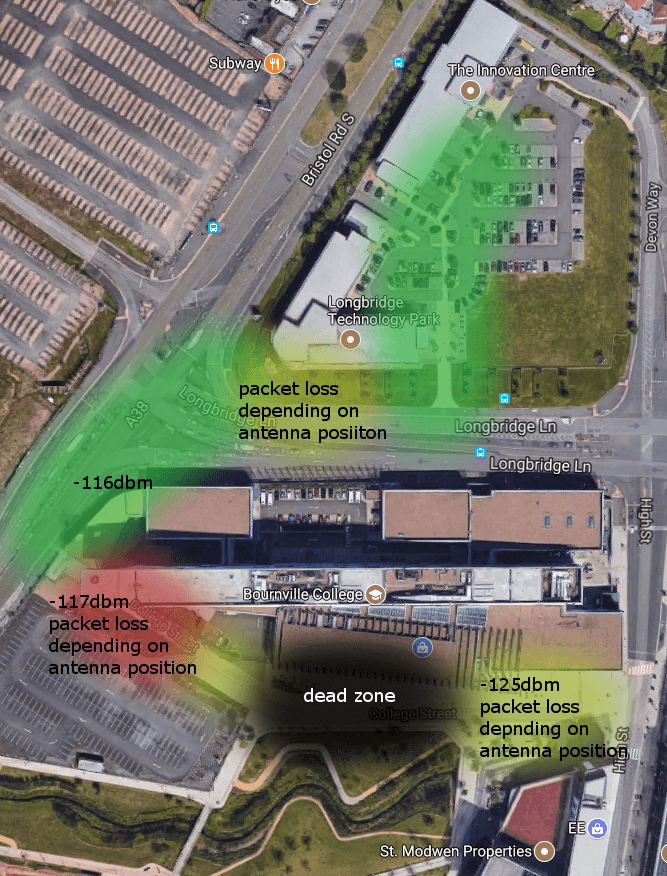

This effect is illustrated in the heat map shown above; the distance from the office (Innovation Centre) is roughly equal across the back of the large college building, yet the signal varies significantly, from moderate loss of signal to a complete dead zone.

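Transmit power and receiver sensitivity therefore both need to be considered. A higher transmit power is better, while for sensitivity a lower (more negative) dBm figure is better, as it means the receiver can detect weaker signals. Tuning antennas can also have a significant impact on the range of the product.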

Size

The good news with size constraints is that they are extremely common in the IoT sector. Consequently, there is plenty of choice for tiny radios, especially around Bluetooth. This can be seen in smart watches and other wearables that are Bluetooth-enabled.

However, the bad news is that in practice, the effectiveness of the radio system depends on the antenna, and the recommended antenna size has not decreased at the same rate as the chips. When looking at data sheets for radio chips, the suggested PCB layout often dwarfs the size of the actual chip due to the antenna.

The size of the antenna has a very big impact on range; therefore, the ability of a device to meet size constraints is closely aligned with the range requirement. A smart watch only needs to communicate around 5m maximum, usually sub-1m with a phone in the customer’s pocket.

Achieving high range and a tiny size would be nigh on impossible, simply due to the fact that a small antenna cannot transmit a strong signal over a significant distance.
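To give a feel for why antennas do not shrink with the chips, the sketch below computes the free-space quarter wavelength at a given frequency, a common starting point for simple monopole antennas. This is only a first approximation under stated assumptions: practical antennas are shortened or lengthened by the PCB material, the enclosure and the ground plane, and the two frequencies used are simply examples of the bands discussed in this article.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def quarter_wavelength_mm(freq_hz):
    """Free-space quarter wavelength in millimetres."""
    wavelength_m = SPEED_OF_LIGHT_M_S / freq_hz
    return wavelength_m / 4 * 1000


for label, freq_hz in [("868 MHz", 868e6), ("2.44 GHz", 2.44e9)]:
    print(f"{label}: about {quarter_wavelength_mm(freq_hz):.0f} mm")
```

The lower the frequency, the longer the antenna, which is one reason tiny wearables tend to sit in the 2.4 GHz band rather than in the sub-GHz bands.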

Radio unit cost

In the same way that market demand has led to great reductions in radio size, the prevalence of radio systems in the market has led to massive competition between chip-makers and a subsequent decrease in price.

Low-energy Bluetooth chips in high volumes can come at around $1 per device, all of which means that keeping unit cost down is achievable without great increases in effort.

The unit cost of the radio is made up of three parts:

- The silicon – in this case, we are assuming the radio is based around an IC with the actual radio built in, as opposed to discrete components. Most low power radio ICs cost less than $5 in volume.

- The circuit – which is similarly very low cost, with most low power radio ICs only requiring low cost passive components to complete the design.

- The antenna – the much more variable aspect of the unit, which is turning out to be a very problematic component!

The cheapest option here is a PCB antenna, which is used a lot for Bluetooth applications where range is not a priority. Wire antennas can give good range, but are easily detuned and inconsistent in performance depending on assembly.

Finally, chip and external antennae offer the best performance – and importantly, repeatable performance – but cost more.

Regulations

Establishing target markets and specific countries that the device needs to work in is essential at the outset of the project.

Beyond compliance, regional restrictions limit radio choice.

Some bands – such as the 2.4 GHz band, that Bluetooth, Wi-Fi, and Zigbee use, among others – are global. This reduces the significance of this factor; however, these bands are extremely congested, which is worth considering.

Most other radio bands are region-specific – for example, the EU or the USA.

Once the target countries have been identified, the allotted bands for each region need to be identified. There are often equivalent bands from region to region – for instance, in Europe, devices operating on the 868 MHz band would need to operate on 915 MHz in the Americas.

Having two slightly different bands has little impact on the choice of radio system. A module able to transmit on the 868 MHz band would almost certainly be capable of transmitting at 915 MHz. However, the software will need tweaking, and the circuits may need to be altered slightly to optimise the performance.

In addition, the antennae for each region will need to be tuned differently.

Regulation does not just affect frequency, either. It also affects:

- The mark-to-space ratio of the data you can put out

- The percentage utilisation (duty cycle) the device can have of the radio band it is in. In the 868 MHz band, this is 1%

- The maximum transmitting power, which in the 868 MHz band is 25 mW
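The limits above translate into concrete design numbers. The sketch below converts the 25 mW power limit into dBm and works out how much airtime a 1% utilisation limit allows. It assumes the limit is assessed over a one-hour window and uses a hypothetical 50 ms packet airtime; check the applicable standard for the exact measurement rules for your band.

```python
import math


def mw_to_dbm(power_mw):
    """Convert a power in milliwatts to dBm."""
    return 10 * math.log10(power_mw)


def max_airtime_s(duty_cycle_percent, window_s=3600):
    """Total transmit time allowed within the window (assumed to be one hour)."""
    return duty_cycle_percent * window_s / 100


def max_packets(duty_cycle_percent, packet_airtime_ms, window_s=3600):
    """Whole packets that fit in the allowed airtime."""
    return int(max_airtime_s(duty_cycle_percent, window_s) * 1000 // packet_airtime_ms)


print(f"25 mW is about {mw_to_dbm(25):.1f} dBm")
print(f"1% of an hour is {max_airtime_s(1):.0f} s of airtime")
print(f"Packets per hour at 50 ms each: {max_packets(1, 50)}")
```

A budget like this is usually generous for a sensor that reports a few times an hour, but it can become a real constraint for gateways or for devices using slow, long-range modulation with long packets.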

At the end of development, the new product will have to undergo compliance, which can actually be quite a large expense. This is particularly true when modifications have to be made, as then re-runs are required.

It is worth noting that most products require some modifications, and budget for this should always be set aside.

Radio directives

In Europe, IoT devices fall under the Radio Equipment Directive, which you can learn more about here. In the USA, the FCC defines the compliance regulations.

It is important to work out exactly what tests will be required up-front and their cost. Usually, electronics devices will need to undergo some form of emissions testing, as well as radio tests.

Development Costs

Leading on from compliance, development cost is the total expenditure, including staff, services and material from concept to actually launching the product on the market.

For radio, minimal development cost is achieved by designing in a module with all the development work done. Essentially, all that is required is a tuned antenna and then the product is ready.

However, these modules cost significantly more than a chip and, therefore, increase the unit cost of the product.

Despite this, it is not a simple conclusion. Modules come with a range of benefits as well as decreasing the hardware and software work. Many come pre-certified, which means that compliance is reduced, and the risk of failing compliance is also lower, as there are fewer influencing factors.

In particular, for global products, the benefits of reducing compliance cannot be overstated, as multiple runs to ensure compliance with several slightly differing standards are incredibly time-consuming.

To demonstrate the value of modules, we only need to look at laptops, which are high volume, global products and tend to use modules. This illustrates that modules can be cost effective even at high volumes, especially for Wi-Fi, and are all but essential for GSM.

The other substantial area for development cost is the actual PCB (printed circuit board) and the RF design around the chip.

Certainly, historically, many radio chips needed careful balancing networks and discrete design around the chip, which requires effort and extensive testing in order to optimise performance. Newer chips incorporate more of this within the device, which reduces – but does not eliminate – the need to optimise the radio’s performance.

As a rule of thumb, for high power radios such as GSM and WiFi, modules are preferable, whilst for low power radios such as Bluetooth or Zigbee, the benefits are more marginal.
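The module versus chip decision can be framed as a break-even calculation between one-off development cost and per-unit cost. The sketch below is a simplified model with entirely hypothetical figures; it ignores factors such as time to market and the risk of failing compliance, which often favour modules.

```python
def total_cost(development_cost, unit_cost, volume):
    """One-off development cost plus per-unit radio cost over the production run."""
    return development_cost + unit_cost * volume


def break_even_volume(dev_cost_chip, unit_cost_chip, dev_cost_module, unit_cost_module):
    """Volume above which the chip-based design is cheaper overall.

    Assumes the chip design costs more to develop but less per unit.
    """
    return (dev_cost_chip - dev_cost_module) / (unit_cost_module - unit_cost_chip)


# Hypothetical figures for illustration only.
volume = break_even_volume(
    dev_cost_chip=60_000, unit_cost_chip=2.0,
    dev_cost_module=15_000, unit_cost_module=6.5,
)
print(f"Chip-based design pays off above about {volume:.0f} units")
```

Running the same calculation with real quotes for both routes gives a quick sanity check before committing to either.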

Data rate

Data rate demand can vary massively, from, for example, a video application – typically, very high data rate – to a water meter, which may communicate around 10 bytes per day.
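To put the water meter example in numbers, the sketch below converts a daily payload into an average bit rate and into daily airtime at a given link rate. The 1 kbps link rate is an assumed figure, and protocol overhead (headers, acknowledgements, retries) is ignored.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_bit_rate_bps(payload_bytes_per_day):
    """Average application data rate in bits per second."""
    return payload_bytes_per_day * 8 / SECONDS_PER_DAY


def airtime_per_day_s(payload_bytes_per_day, link_rate_bps):
    """Time spent transmitting the payload each day at the given link rate."""
    return payload_bytes_per_day * 8 / link_rate_bps


print(f"Average rate: {average_bit_rate_bps(10):.6f} bps")
print(f"Airtime at an assumed 1 kbps link: {airtime_per_day_s(10, 1000):.2f} s per day")
```

A requirement this small leaves plenty of room to trade data rate away in favour of range and battery life.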

When designing IoT devices, the data rate trades off against range and battery life, and finding a compromise here is always necessary.

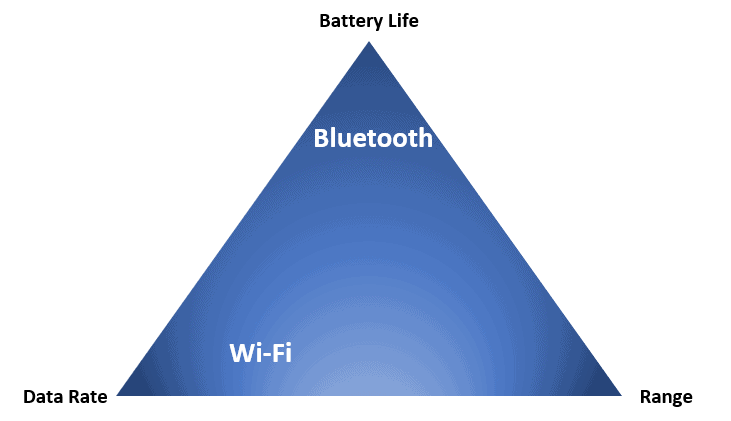

This trade-off is illustrated effectively when comparing Wi-Fi and Bluetooth. Wi-Fi operating at 2.4 GHz under the 802.11g standard has a nominal data rate of up to 54 Mbps. Compare this to Bluetooth Classic, which also operates at 2.4 GHz but has a data rate of around 1.5 Mbps. This difference is easily explained by looking at how the technologies are used in practice.

Bluetooth and Wi-Fi are two technologies that operate on different areas of the trade-off triangle.

Data Rate Trade-Off Triangle

Bluetooth is designed for low-cost and low-power designs, and therefore bandwidth has been traded off against power. The technology each protocol is built on also differs; fundamentally, though, the lower data rate is driven by Bluetooth having a different application to Wi-Fi.

High data rate protocols include Wi-Fi and cellular (where the data rate increases with each generation from 2G to 5G). Low-rate options include LoRa and Sigfox, to give a couple of examples, and these are also low power.

As an initial step, establishing where the new device concept is positioned on this triangle can eliminate a lot of systems, even if it does not immediately highlight one option.

Interoperability

When designing an IoT product that contains a radio, it may need to work with other companies’ products.

If it is a requirement of the device, then this will limit the choice of radio system, as the system used must be able to work on the same frequency and wave band as the target devices.

Furthermore, the device will need ‘profiles’, as they are called, programmed into it. Profiles are essentially the languages that the compatible devices speak.

The best example of where profiles are used is Bluetooth.

Bluetooth has standard profiles for common IoT products, such as keyboards and computer mice. These pieces of equipment will, therefore, work with other products. Zigbee also has several profiles that allow equipment to work with different vendors.

There is a secondary advantage to using profiles and standards that are already in use; the reduction in development and debugging effort. Other businesses will have already invested lots of software development time into producing these communications standards and building them into dev kits, and it will reduce overall costs to make use of these.

In spite of this, it is fairly common for there not to be any interoperability requirements, giving developers greater freedom in their choice of radio system.

Topology

Finally, although topology choice does not tend to restrict radio system choice, some protocols are better suited to one or the other.

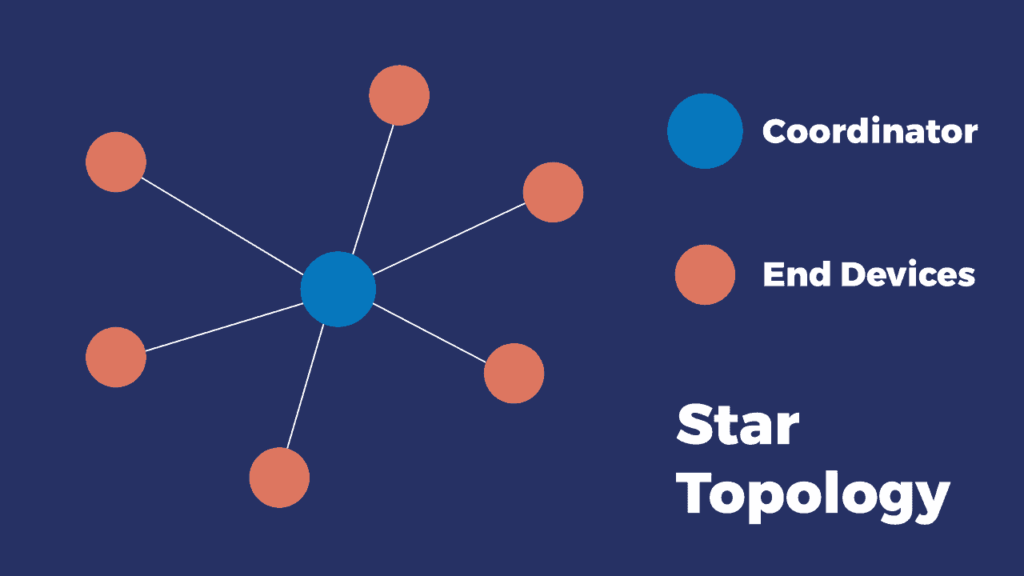

The two classic topologies are star and mesh.

Star

The most recognisable star network in use is a home Wi-Fi network: a central router, often somewhere on the ground floor, with all devices connecting to and interfacing with that one router. Those closest to the router and with the fewest obstacles get the best connection, while in some areas of the house or flat, the signal is quite weak.

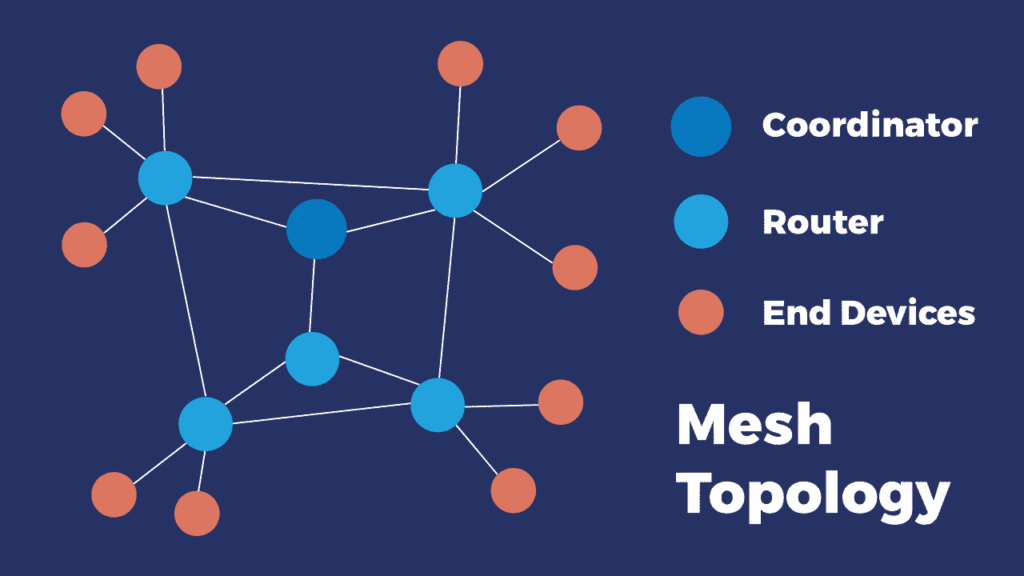

Mesh

A mesh network can still have a central server or router, but lots of devices also interconnect. In the ideal mesh scenario, all devices can interconnect. The best established system that uses a mesh topology is Zigbee.

It’s important here to get feedback for how the product actually performs in its target environment, rather than just on somebody’s desk. Invariably, this brings up issues such as software bugs, maybe some hardware bugs if you’re unlucky. And that all gets fed back into any further iterations of the design.

The basic set-up is that repeaters are situated in areas with strong signal or connected using wired protocols, such as Ethernet. The wireless, mobile devices then relay the data to the repeater instead of directly to the router.

There are several advantages to mesh topologies:

- In a mesh network, each device communicates and relays messages to the nearest device, reducing the transmission power required and extending battery life.

- Mesh networks are ideal for large buildings, as concrete can block a lot of data, and often the distance to the central router will be too great for one device.
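The difference between the two topologies can be sketched as a graph search. In the example below, each device lists the neighbours it can reach by radio, and a breadth-first search counts the hops needed to reach the router. The device names and links are hypothetical, and real mesh protocols such as Zigbee use their own routing mechanisms rather than this simple search.

```python
from collections import deque


def hops_to_router(links, start, router="router"):
    """Breadth-first search for the fewest hops from start to the router.

    links maps each device to the devices within its radio range.
    Returns None if the router cannot be reached.
    """
    visited = {start}
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if node == router:
            return hops
        for neighbour in links.get(node, ()):
            if neighbour not in visited:
                visited.add(neighbour)
                queue.append((neighbour, hops + 1))
    return None


# Mesh: devices relay for each other, so C reaches the router via B and A.
mesh_links = {
    "router": ["A"],
    "A": ["router", "B"],
    "B": ["A", "C"],
    "C": ["B"],
}

# Star: only direct links to the router count; B and C are out of range.
star_links = {
    "router": ["A"],
    "A": ["router"],
    "B": [],
    "C": [],
}

print("Mesh, C to router:", hops_to_router(mesh_links, "C"), "hops")
print("Star, C to router:", hops_to_router(star_links, "C"))
```

In the mesh case the distant device is reachable through its neighbours; in the star case it simply has no route, which is the coverage problem described above.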

However, software development for mesh networks is often more complex and they can become difficult to manage. From a customer perspective, mesh topologies are more challenging to understand and, consequently, more tech support is likely to be required on an ongoing basis.

Star networks are much simpler and customers mostly will understand the principle, but they suffer from the problems that mesh networks solve.

In conclusion, the final choice of radio system for an IoT project will be the culmination of many competing factors. While this article may raise as many questions as it answers, we hope that it provides the framework for a full evaluation of an IoT concept, to then ensure the best radio system is chosen for the device.

Summary

- Consumers typically purchase IoT devices for convenience. Therefore, having a long battery life is essential for most wireless products. In terms of choosing a radio system, this means minimising the length of time the receiver is on for and optimising the transmitter power.

- The range requirement of a device varies massively, and the performance of different radio systems depends on the environment. Standards are often developed to be used over a specific range, and so determining the range requirement for a device will immediately eliminate multiple options.

- Size is very closely associated with antenna size, and therefore, range. There is a wide array of tiny radio modules, but a long range invariably requires a larger antenna.

- Unit cost similarly varies the most due to antenna cost; module and circuit board costs will be relatively consistent.

- Working out target markets and subsequently the regulations and conformity requirements that a device will be subject to at the outset of the project is important. This can add up to a significant cost, and must not be underestimated.

- Development costs can be reduced by using pre-certified modules; however, these are more expensive per unit, especially for high volume products. For some radio types, e.g. Wi-Fi, pre-certified modules make sense unless the volume is very high.

- Higher data rates can generally be achieved by using a higher frequency standard; however, this is not always the case. A high data rate also will use more power and is more difficult to transmit over a long range. Data rate, battery life and range can be laid out as three points of a triangle which can serve as a useful visual aid to determining priorities.

- If the device needs to be able to talk to other interoperable products, then profiles need to be programmed. Using these profiles can sometimes reduce development effort as they have already been debugged.

- There are lots of different network topologies, the most common of which are star and mesh. Each one solves different problems, and has different advantages.

What next?

If you have any queries on this subject, or any other area of IoT, we would love to hear from you. ByteSnap’s engineers have developed numerous connected devices for a range of applications, and therefore are well-placed to assist with your project. Otherwise, there is more information on our services pages, and numerous other articles either below or in news.